
Your public key has been saved in /home/hadoop/.ssh/id_rsa.pub Your identification has been saved in /home/hadoop/.ssh/id_rsa Just press Enter to complete the process: Generating public/private rsa key pair.Įnter file in which to save the key (/home/hadoop/.ssh/id_rsa):Įnter passphrase (empty for no passphrase): Next, run the following command to generate Public and Private Key Pairs: ssh-keygen -t rsa Next, you will need to configure passwordless SSH authentication for the local system.įirst, change the user to hadoop with the following command: su - hadoop Step 3 – Configure SSH Key-based Authentication Įnter the new value, or press ENTER for the default Īdding new user `hadoop' (1002) with group `hadoop'. Provide and confirm the new password as shown below: Adding user `hadoop'. Run the following command to create a new user with name hadoop: sudo adduser hadoop It is a good idea to create a separate user to run Hadoop for security reasons. OpenJDK 64-Bit Server VM (build 11.0.11+9-Ubuntu-0ubuntu2.20.04, mixed mode, sharing) OpenJDK Runtime Environment (build 11.0.11+9-Ubuntu-0ubuntu2.20.04) You should get the following output: openjdk version "11.0.11" Once installed, verify the installed version of Java with the following command: java -version

#DOWNLOAD SPARK WITH HADOOP LINUX INSTALL#

You can install OpenJDK 11 from the default apt repositories: sudo apt update sudo apt install openjdk-11-jdk Hadoop version 3.3 and latest also support Java 11 runtime as well as Java 8. Hadoop is written in Java and supports only Java version 8.

#DOWNLOAD SPARK WITH HADOOP LINUX HOW TO#

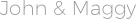

This tutorial will explain you to how to install and configure Apache Hadoop on Ubuntu 20.04 LTS Linux system. It has four major components such as Hadoop Common, HDFS, YARN, and MapReduce. It is an ecosystem of Big Data tools that are primarily used for data mining and machine learning.Īpache Hadoop 3.3 comes with noticeable improvements and many bug fixes over the previous releases.

It uses HDFS to store its data and process these data using MapReduce. Hadoop is a free, open-source, and Java-based software framework used for the storage and processing of large datasets on clusters of machines.
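The Java requirement can be checked from a script instead of by eye. The following is a minimal sketch, not part of the original tutorial: the function names `java_major_version` and `is_supported_for_hadoop33` are hypothetical, and the accepted versions (8 and 11) are simply the ones this tutorial names for Hadoop 3.3.

```shell
#!/usr/bin/env bash
# Extract the major Java version from the first line of `java -version` output.
# Handles the legacy "1.8.0_292" scheme (Java 8) and the modern "11.0.11" scheme.
java_major_version() {
    local line="$1" ver
    ver=$(printf '%s\n' "$line" | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
    case "$ver" in
        "")  return 1 ;;                                   # no version string found
        1.*) ver="${ver#1.}"; printf '%s\n' "${ver%%.*}" ;; # 1.8.0_292 -> 8
        *)   printf '%s\n' "${ver%%.*}" ;;                  # 11.0.11 -> 11
    esac
}

# Hypothetical helper: succeed only for the runtimes named in this tutorial
# (Java 8 and Java 11).
is_supported_for_hadoop33() {
    case "$(java_major_version "$1")" in
        8|11) return 0 ;;
        *)    return 1 ;;
    esac
}

# Usage: java prints its version to stderr, hence the 2>&1 redirect.
if command -v java >/dev/null 2>&1; then
    first_line=$(java -version 2>&1 | head -n 1)
    if is_supported_for_hadoop33 "$first_line"; then
        echo "Java $(java_major_version "$first_line") is supported"
    else
        echo "Unsupported or unrecognized Java: $first_line" >&2
    fi
fi
```

Keeping the parsing in a function that takes the version line as an argument makes it possible to check the logic on a machine without Java installed.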
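When the setup is repeated on a machine that may already have a hadoop account, it can help to check for the user before calling `adduser`. This sketch assumes a Debian-style `adduser` as used in this tutorial; `user_exists` and `ensure_user` are hypothetical helper names, not commands from the original text.

```shell
#!/usr/bin/env bash
# Return success if the named account exists (id -u fails for unknown users).
user_exists() {
    id -u "$1" >/dev/null 2>&1
}

# Hypothetical wrapper: create the account only when it is missing.
# adduser prompts for a password and user details, so run this interactively.
ensure_user() {
    local name="$1"
    if user_exists "$name"; then
        echo "User '$name' already exists"
    else
        sudo adduser "$name"
    fi
}

# Usage: ensure_user hadoop
```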
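The interactive `ssh-keygen -t rsa` step, where every prompt is answered with Enter, can also be run without prompts. The sketch below is an assumption-laden alternative rather than the tutorial's own method: `generate_rsa_key` is a hypothetical function name, and its optional directory argument exists only so the function can be tried outside `~/.ssh`. It would be run as the hadoop user.

```shell
#!/usr/bin/env bash
# Generate an RSA key pair without prompts, unless one already exists.
# $1: directory for the key files (hypothetical parameter; defaults to ~/.ssh).
generate_rsa_key() {
    local dir="${1:-$HOME/.ssh}"
    local key="$dir/id_rsa"
    mkdir -p "$dir" && chmod 700 "$dir" || return 1
    if [ -f "$key" ]; then
        echo "Key already exists: $key"
        return 0
    fi
    # -N "" sets an empty passphrase, -f names the output file,
    # -q suppresses the progress messages.
    ssh-keygen -q -t rsa -N "" -f "$key"
}

# Usage (as the hadoop user): generate_rsa_key
```

Refusing to overwrite an existing key avoids silently invalidating a key pair that other hosts may already trust.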